
The Agent Stack Is Becoming Boring in the Best Way.

A small reading-list survey of agent memory, voice control, embeddings, and verification, and why the interesting shift is not smarter chat but more ordinary software.

It started with Chrome’s Reading List, which is not the grandest instrument for taking the temperature of an industry, but has the advantage of being honest. Bookmarks are aspirational; reading lists are more incriminating. They preserve the tabs that seemed important enough to defer, which means they are less a library than a drawer full of receipts from the current obsession.

Mine had become a small sedimentary layer of agent infrastructure. Amp’s Opus 4.7 note, dated April 25, 2026. Google’s Gemini Embedding 2 post, dated April 30, 2026. OpenAI’s realtime voice component, a reference implementation created in March and prepared in April. Anthropic’s managed-agent memory docs, undated on the public page but very much of the present moment. A testing skill, a document design system, and, sitting oddly but usefully among them, a 2015 piece from The Information about the AWS diaspora.

This is not a neutral cluster. It is the sort of reading list one accumulates when the question has changed from “can the model do it?” to “what does the rest of the system need to become so the model can do it without turning the product into a haunted spreadsheet?”

The Amp piece is interesting because it describes a model that is better and less indulgent at the same time. Opus 4.7, they write, follows prompts more closely, fills in fewer gaps, researches more, and is less likely to silently generalize from one narrow request into every neighbouring problem. At first glance that sounds like a product manager discovering that the magic assistant has become slightly more bureaucratic, but the more precise reading is that responsibility for filling gaps has moved from the model’s personality to the task specification. If the model no longer papers over vague instructions with cheerful improvisation, the work has to supply clearer success criteria.

That is not a regression. It is a sign of the stack hardening.

The same pattern appears in OpenAI’s realtime voice component. The repo is deliberately not a general-purpose orchestration framework. It is a React/browser layer for voice-driven UI where the application owns the state, the tools stay narrow, and the visible change remains the UI’s responsibility. This sounds almost disappointingly modest until one considers the alternative: a voice agent roaming over a browser like a very confident intern with no filing system and excellent diction.

The important design choice is ownership. The assistant may express intent, call a tool, and speak in a friendly way, but the app decides what state exists and which actions are legitimate. There is a small adapter between model and product, and that adapter is where the dignity of the system lives. It is not glamorous, but neither is a door latch, and buildings would be worse without latches.
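To make the ownership boundary concrete, here is a minimal sketch of such an adapter. It is not the OpenAI component’s actual API: `AppState`, `ToolCall`, `handleToolCall`, and the two tools are hypothetical names chosen for illustration. The model can only propose a named tool call with untyped arguments. The app validates the arguments, refuses anything outside a small registry, and returns a new state rather than letting the model touch state directly.

```typescript
// Hypothetical sketch, not the OpenAI component's API: the app owns state,
// and the model can only propose tool calls that this adapter validates.

type Theme = "light" | "dark";
type AppState = { theme: Theme; cartItems: string[] };

// A tool call as a model might emit it: a name plus untyped JSON arguments.
type ToolCall = { name: string; args: unknown };

type ToolResult =
  | { ok: true; state: AppState; message: string }
  | { ok: false; state: AppState; error: string };

type Tool = (state: AppState, args: unknown) => ToolResult;

function isTheme(x: unknown): x is Theme {
  return x === "light" || x === "dark";
}

const setTheme: Tool = (state, args) => {
  const theme = (args as { theme?: unknown } | null)?.theme;
  if (!isTheme(theme)) {
    return { ok: false, state, error: "theme must be 'light' or 'dark'" };
  }
  return { ok: true, state: { ...state, theme }, message: `Theme set to ${theme}` };
};

const addToCart: Tool = (state, args) => {
  const item = (args as { item?: unknown } | null)?.item;
  if (typeof item !== "string" || item.length === 0) {
    return { ok: false, state, error: "item must be a non-empty string" };
  }
  return {
    ok: true,
    state: { ...state, cartItems: [...state.cartItems, item] },
    message: `Added ${item}`,
  };
};

// A deliberately small registry: any tool not listed here is refused.
// A Map avoids accidentally resolving names like "toString" on a plain object.
const tools = new Map<string, Tool>([
  ["set_theme", setTheme],
  ["add_to_cart", addToCart],
]);

// The adapter: the only path from model intent to application state.
// It never mutates the incoming state; it returns a new state or the old one.
function handleToolCall(state: AppState, call: ToolCall): ToolResult {
  const tool = tools.get(call.name);
  if (!tool) {
    return { ok: false, state, error: `unknown tool: ${call.name}` };
  }
  return tool(state, call.args);
}
```

The refusal paths are the interesting part: an unknown tool name or malformed arguments leave the state untouched and return an error the assistant can speak back to the user, so the visible change stays the UI’s responsibility.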

Anthropic’s managed-agent memory docs make a similar move in a different register. Memory is not presented as an enchanted autobiographical faculty; it is a workspace-scoped collection of text documents mounted into a session as files, with access modes, API operations, version history, and audit trails. The agent reads and writes memory with the same tools it uses for the rest of the filesystem. This seems almost aggressively ordinary, which is exactly why it matters.

The obvious product story is that agents remember. The useful engineering story is that memory needs paths, permissions, versioning, concurrency, deletion, and recovery from bad writes. A memory system that cannot distinguish reference material from writable preference data is not memory so much as a slow prompt injection with a sentimental name. The docs even call out the risk directly: if untrusted input can write into a store, a later session may read that poisoned text as trusted context. In other words, the problem is not that agents forget. The problem is that sometimes they remember too obediently.
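The machinery the docs describe (access modes, version history, audit trails, recovery from bad writes) can be sketched in a few dozen lines. This is not Anthropic’s memory API. `MemoryStore`, its methods, and the seeding mechanism are hypothetical, chosen to show how separating a read-only reference store from a writable preference store, and keeping every version, makes a poisoned write both contained and reversible.

```typescript
// Hypothetical sketch, not Anthropic's memory API: a file-like memory store
// with an access mode, per-path version history, and rollback.

type AccessMode = "read_only" | "read_write";

interface Version {
  content: string;
  author: string; // who wrote it: a session id, a user, an import job
  seq: number;    // monotonically increasing write counter for this store
}

class MemoryStore {
  readonly name: string;
  readonly mode: AccessMode;
  private history = new Map<string, Version[]>();
  private seq = 0;

  // Seed files are how a read-only store receives its reference material.
  constructor(name: string, mode: AccessMode, seed: Record<string, string> = {}) {
    this.name = name;
    this.mode = mode;
    for (const [path, content] of Object.entries(seed)) {
      this.append(path, content, "seed");
    }
  }

  // Latest content at a path, or undefined if nothing was ever written.
  read(path: string): string | undefined {
    const versions = this.history.get(path);
    if (!versions) return undefined;
    return versions[versions.length - 1]?.content;
  }

  // Returns the sequence number of the new version.
  write(path: string, content: string, author: string): number {
    this.assertWritable();
    return this.append(path, content, author);
  }

  // Audit trail: every version of a path, oldest first.
  log(path: string): readonly Version[] {
    return this.history.get(path) ?? [];
  }

  // Recover from a bad write by re-appending an earlier version's content,
  // so the bad write stays visible in the audit trail instead of vanishing.
  rollback(path: string, seq: number, author: string): number {
    this.assertWritable();
    const target = this.log(path).find((v) => v.seq === seq);
    if (!target) throw new Error(`no version ${seq} of ${path}`);
    return this.append(path, target.content, `${author} (rollback to ${seq})`);
  }

  private append(path: string, content: string, author: string): number {
    const versions = this.history.get(path) ?? [];
    versions.push({ content, author, seq: ++this.seq });
    this.history.set(path, versions);
    return this.seq;
  }

  private assertWritable(): void {
    if (this.mode === "read_only") {
      throw new Error(`memory store "${this.name}" is read-only`);
    }
  }
}
```

In use, reference material goes into a store constructed as `read_only`, so no session can overwrite it, while preferences live in a `read_write` store whose `log` shows who wrote what. A suspicious entry from an untrusted source can then be reverted with `rollback` without erasing the evidence that it happened.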

Google’s Gemini Embedding 2 post adds another layer: retrieval is becoming multimodal infrastructure rather than a text-only convenience. The model maps text, images, video, audio, and documents into a shared embedding space, with task prefixes for question answering, fact checking, code retrieval, search, clustering, classification, and anomaly detection. The marketing pitch is broad, but the technical implication is specific: agents will increasingly need to retrieve from the same messy world humans use, not from a sanitised pile of Markdown.
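A shared embedding space means one nearest-neighbour search can return a text file, an image, or an audio clip for the same query. The sketch below shows that retrieval step with cosine similarity. It makes no calls to any Gemini API: the 3-dimensional vectors are hand-written stand-ins for real embeddings, and the rule of keeping one index per task type (`TaskIndex`, the `task` field) is an illustrative assumption about what “task-shaped” might mean, not something the post prescribes.

```typescript
// Hypothetical sketch: a tiny in-memory vector index with cosine similarity.
// Real vectors would come from an embedding model; these 3-dimensional
// stand-ins are hand-written so the example runs without any API.

type Modality = "text" | "image" | "audio" | "video" | "document";

interface Item {
  id: string;
  task: string; // the task type the vector was produced for (assumed)
  modality: Modality;
  vector: number[];
}

function cosine(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("dimension mismatch");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  a.forEach((x, i) => {
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  });
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// One index per task: vectors produced for a different task are refused,
// so a query is only compared against vectors made for the same purpose.
class TaskIndex {
  readonly task: string;
  private items: Item[] = [];

  constructor(task: string) {
    this.task = task;
  }

  add(item: Item): void {
    if (item.task !== this.task) {
      throw new Error(`index "${this.task}" cannot hold a "${item.task}" vector`);
    }
    this.items.push(item);
  }

  // The k nearest items by cosine score, whatever their modality.
  search(query: number[], k: number): { id: string; modality: Modality; score: number }[] {
    return this.items
      .map((item) => ({ id: item.id, modality: item.modality, score: cosine(query, item.vector) }))
      .sort((x, y) => y.score - x.score)
      .slice(0, k);
  }
}
```

The modality travels with each result, so the caller can decide whether to show an image, play a clip, or quote text, while the ranking itself treats them all as points in one space.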

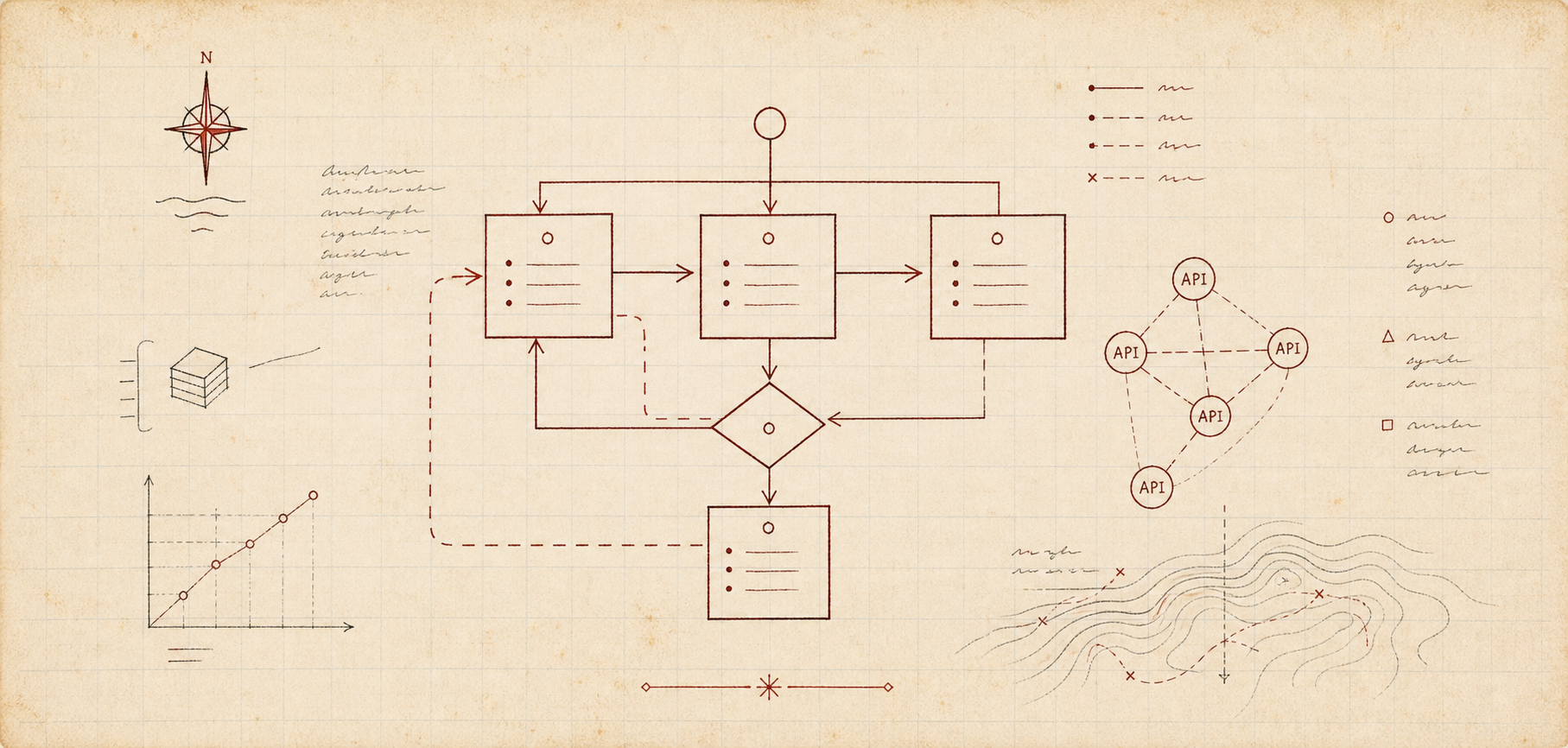

This is where the stack begins to look less like chat and more like software. A voice UI needs an action boundary. Memory needs an access model. Retrieval needs task-shaped indexes. Coding agents need tests and success criteria. Good model behaviour becomes useful only when surrounded by boring machinery that limits, checks, stores, recalls, and explains what happened.

There is a peculiar continuity here with the older AWS article. In April 2015, The Information described the “AWS diaspora”: people who had conceived, built, and sold Amazon’s cloud infrastructure scattering into Google, Twitter, and startups. The article was about people, but its deeper subject was operational culture. Cloud was not just a product category; it was a set of assumptions about scale, APIs, provisioning, reliability, and who should own which layer of the system. Once those assumptions lived in enough people’s hands, they travelled.

Agent tooling may be entering a similar stage, though the thing being exported is different. The old cloud diaspora carried mental models about elastic infrastructure. The agent diaspora is carrying mental models about bounded autonomy: tool ownership, memory hygiene, retrieval shape, verification loops, prompt contracts, UI state, and the surprisingly delicate matter of letting a model help without letting it become the application.

This is why the current moment feels less like a demo wave and more like a craft formation. The exciting work is not only in larger context windows or better benchmark scores, though those still matter. It is in the small disciplines that make the model legible to the rest of the system. Tell it what done means. Give it a way to check itself. Keep state where state belongs. Make memory auditable. Treat tools as product surface, not as a bag of shortcuts.
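“Tell it what done means” and “give it a way to check itself” can be read as a small contract: success criteria written as executable checks, and a bounded loop that reports which checks failed. The sketch below is one hypothetical shape for that contract; `TaskSpec`, `Check`, `Attempt`, and `runUntilDone` are illustrative names, and the `attempt` function stands in for whatever model or agent call a real system would make.

```typescript
// Hypothetical sketch: a task spec that states what "done" means as
// executable checks, plus a bounded loop that feeds failures back.

interface Check {
  name: string;
  passes: (output: string) => boolean;
}

interface TaskSpec {
  instruction: string;
  checks: Check[];     // success criteria, stated before any work starts
  maxAttempts: number; // bound the loop instead of retrying forever
}

// Stand-in for a model or agent call: it receives the instruction plus one
// message per check the previous attempt failed, and returns new output.
type Attempt = (instruction: string, feedback: string[]) => string;

interface RunResult {
  output: string;
  done: boolean;
  attempts: number;
}

function runUntilDone(spec: TaskSpec, attempt: Attempt): RunResult {
  let feedback: string[] = [];
  let output = "";
  for (let i = 1; i <= spec.maxAttempts; i++) {
    output = attempt(spec.instruction, feedback);
    const failed = spec.checks.filter((c) => !c.passes(output)).map((c) => c.name);
    if (failed.length === 0) {
      return { output, done: true, attempts: i };
    }
    // Feed back which criteria failed, not a vague "try again".
    feedback = failed.map((name) => `failed check: ${name}`);
  }
  return { output, done: false, attempts: spec.maxAttempts };
}
```

The design choice worth noticing is that `done` is decided by the checks, not by the model’s own claim of completion, and that running out of attempts is reported honestly rather than papered over.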

At a certain point, every important technology becomes boring enough to operate. That does not mean it stops being powerful. It means the power has found containers: file formats, APIs, conventions, runbooks, defaults, patterns, and the occasional stern warning in the documentation. The agent stack is starting to acquire those containers now.

Good. The magic was never the part we could rely on.