Field entry, 6 May.

It started with a requirement that sounded almost too reasonable: put the agent and service shape on Cloudflare and see how much of it still felt natural.

The trap in that sentence is “put.” A web app can be put almost anywhere. An agent turn is less obliging. It is half conversation, half job runner, half state machine, and yes, that is too many halves, which is usually how these systems announce that the architecture is lying to you.

The more precise version of the experiment was this: what happens if the Cloudflare objects are not deployment furniture, but the product boundaries themselves?

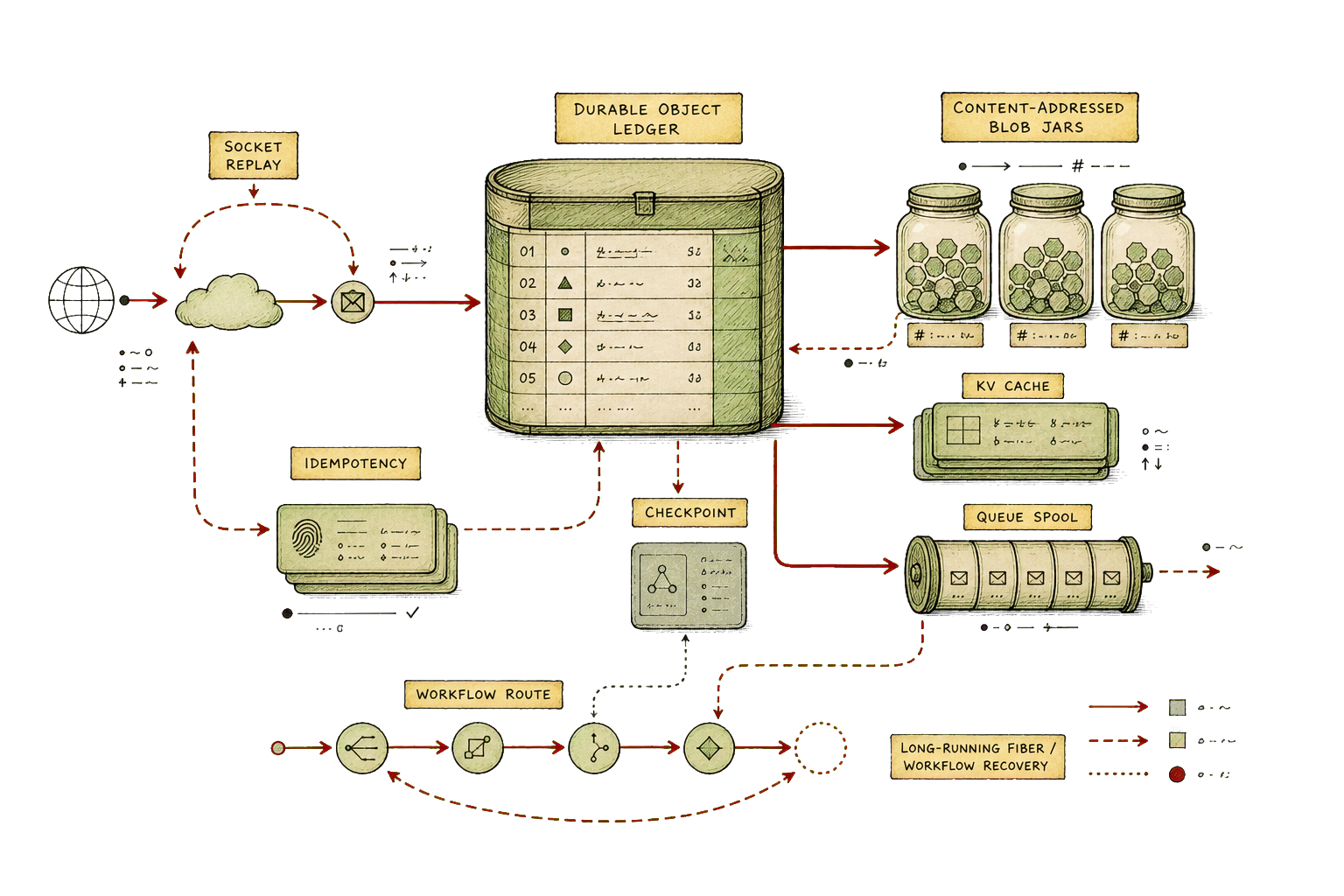

That framing changes the work immediately. Workers stop being a place to host code and become the visible edge of an authority. Durable Objects stop being an implementation trick and become the answer to the question “who owns this piece of continuity?” R2 stops being a bucket and becomes the right home for bodies rather than ledgers. Queues stop being plumbing and become a way to keep protocol callbacks out of the critical path. Workflows stop being background jobs and become a formal admission that some work needs retries, waits, and checkpointed progress.

Many Workers, fewer mysteries

The experiment split the runtime into a dozen Worker boundaries: a web surface, an ingress hub, an agent runtime, a resource authority, a workspace authority, an inference authority, a voice bridge, a diagnostics tail, and several external protocol adapters. The exact names do not matter. The important thing is that each boundary had a reason to exist after the diagram was taken away.

The web Worker serves the application and forwards dynamic traffic inward after authentication. The ingress Worker owns browser-facing RPC, WebSocket entry, replay, turn reservation, idempotency, and routing. The agent Worker owns durable agent classes, browser tools, worker loading, research workflows, and provider-facing agent behavior. The workspace Worker owns file history and content. The resource Worker owns tenant configuration and resource state. The inference Worker owns model-call state and stream metadata.

This sounds like a lot of pieces, and it is. But the alternative is not simplicity. The alternative is one large service with several private governments inside it, each pretending the others are only helper functions.

Cloudflare made the split concrete because service bindings force a question early: is this an internal authority call, a public edge, a protocol adapter, or a browser-facing projection? That question is product work wearing an infrastructure hat.

Durable Objects are notebooks

The cleanest fit was the workspace authority: Durable Object SQLite for the ledger, R2 for the bodies.

File writes become iterations. Content becomes sha256-addressed objects. Checkpoints become snapshots with enough metadata to reconstruct the workspace without pretending a live process is still sitting there, patiently holding your place like a waiter with supernatural memory. That is the right kind of boring. The bytes live where bytes should live, and the decisions live where ordering matters.
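The ledger-and-bodies split can be sketched in a few lines. This is a minimal illustration, not the case study's code: `WorkspaceLedger`, `Iteration`, and the method names are hypothetical, an array stands in for Durable Object SQLite, a `Map` stands in for R2, and hashing uses Node's `node:crypto` for brevity (inside a Worker, Web Crypto's `crypto.subtle.digest` would be the likelier choice).

```typescript
import { createHash } from "node:crypto";

// Hypothetical shape: one ledger row per file write.
interface Iteration {
  seq: number;    // position in the ledger; ordering lives here
  path: string;
  sha256: string; // address of the body in the blob store
}

class WorkspaceLedger {
  private iterations: Iteration[] = [];          // stand-in for Durable Object SQLite
  private blobs = new Map<string, Uint8Array>(); // stand-in for R2

  write(path: string, body: Uint8Array): Iteration {
    const sha256 = createHash("sha256").update(body).digest("hex");
    // Identical content is stored once; only the ledger grows.
    if (!this.blobs.has(sha256)) this.blobs.set(sha256, body);
    const iteration: Iteration = { seq: this.iterations.length + 1, path, sha256 };
    this.iterations.push(iteration);
    return iteration;
  }

  // A snapshot is the latest content address per path as of a sequence number.
  snapshot(atSeq: number): Map<string, string> {
    const files = new Map<string, string>();
    for (const it of this.iterations) {
      if (it.seq > atSeq) break;
      files.set(it.path, it.sha256);
    }
    return files;
  }

  read(sha256: string): Uint8Array | undefined {
    return this.blobs.get(sha256);
  }

  blobCount(): number {
    return this.blobs.size;
  }
}
```

Nothing in `snapshot` depends on a live process: given the ledger rows and the blob store, the workspace at any sequence number can be rebuilt from scratch.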

The same pattern appears elsewhere. A thread Durable Object persists event logs, sticky events, projections, resolved approvals, and hibernating WebSocket attachments. A resource Durable Object owns tenant resource state, monotonically ordered changes, TTL expiry, replay, and idempotency, while KV is explicitly a cache rather than the source of truth. An inference Durable Object records stream metadata, session affinity, and request state while delegating provider retry, fallback, and caching policy to the gateway that is actually built to own it.
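As a rough sketch of what "monotonically ordered changes, TTL expiry, replay, and idempotency" can look like inside a single owner, here is an in-memory stand-in. The class, field names, and the choice to pass `now` explicitly are all assumptions made for illustration; a real Durable Object would persist these rows rather than hold them in memory.

```typescript
// Hypothetical change record for a tenant resource.
interface Change {
  seq: number;
  key: string;
  value: string;
  expiresAt: number; // epoch milliseconds
}

class ResourceState {
  private seq = 0;
  private changes: Change[] = [];
  private latest = new Map<string, Change>();
  private applied = new Map<string, number>(); // idempotency key -> seq

  // `now` is passed in so the example stays deterministic.
  put(idempotencyKey: string, key: string, value: string, ttlMs: number, now: number): number {
    const prior = this.applied.get(idempotencyKey);
    if (prior !== undefined) return prior; // a retry gets the same answer and adds no change

    this.seq += 1;
    const change: Change = { seq: this.seq, key, value, expiresAt: now + ttlMs };
    this.changes.push(change);
    this.latest.set(key, change);
    this.applied.set(idempotencyKey, this.seq);
    return this.seq;
  }

  get(key: string, now: number): string | undefined {
    const change = this.latest.get(key);
    if (change === undefined || change.expiresAt <= now) return undefined;
    return change.value;
  }

  // Consumers (a KV cache refresher, for example) catch up from the last seq they saw.
  replay(afterSeq: number): Change[] {
    return this.changes.filter((c) => c.seq > afterSeq);
  }
}
```

A cache built from `replay` can always be thrown away and rebuilt, which is what keeps it a cache rather than a second source of truth.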

The lesson is not that every bit of state wants a Durable Object. The lesson is that every bit of meaningful state wants an owner, and Cloudflare makes that ownership pleasantly hard to hand-wave.

Sockets are not truth

One of the more useful corrections was treating WebSockets as delivery, not reality.

This matters because agent interfaces are extremely good at making delivery look like truth. If a token appears in the transcript, the work feels real. If the socket drops, the work feels lost. That is an understandable feeling and a terrible architecture.

In the Cloudflare version, stream state lives in Durable Object storage. The socket is a client attached to a sequence, not a sacred thread of consciousness. If the browser disconnects, it can replay from a durable point. If a shard needs to avoid becoming the broadcast ceiling, fanout has its own limits and state. The default fanout target in the case study was 200 connections per shard, with higher bounded limits in code, which is exactly the sort of number that makes a design less mystical.
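The "client attached to a sequence" idea is small enough to sketch. The names below (`ThreadLog`, `replayAfter`, `shardsNeeded`) are hypothetical and the log is in memory; the only figure taken from the case study is the default of 200 connections per shard.

```typescript
interface StreamEvent {
  seq: number;
  data: string;
}

// In-memory stand-in for an event log kept in Durable Object storage.
class ThreadLog {
  private events: StreamEvent[] = [];

  append(data: string): StreamEvent {
    const event: StreamEvent = { seq: this.events.length + 1, data };
    this.events.push(event);
    return event;
  }

  // A reconnecting client reports the last seq it saw and receives the rest.
  replayAfter(lastSeenSeq: number): StreamEvent[] {
    return this.events.filter((e) => e.seq > lastSeenSeq);
  }
}

// Default fanout target from the case study.
const DEFAULT_FANOUT_PER_SHARD = 200;

// How many fanout shards a given audience needs, with at least one shard.
function shardsNeeded(connections: number, perShard: number = DEFAULT_FANOUT_PER_SHARD): number {
  return Math.max(1, Math.ceil(connections / perShard));
}
```

A dropped socket changes nothing in `ThreadLog`; the browser reconnects, sends its last sequence number, and picks up where delivery stopped.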

Durable Objects did not remove the hard parts. They gave them proper labels: event log, projection, sequence, attachment, replay, checkpoint, resolved approval. That is frequently the first useful thing an architecture can do.

Long work needed two paths

Long-running work split into two shapes.

Some work belongs to the agent. It has conversational continuity, tool state, and a user-visible turn. For that, fibers and stashed progress fit naturally: active work can pause, recover after eviction, and continue with its own agent-local context. Other work is independent enough that it wants Workflows: multi-step jobs, research flows, approval waits, recovery tasks, and drills that need durable retries rather than a heroic request handler holding its breath.

That split is useful because “background job” is too blunt a category. A model turn, a deployment probe, a protocol callback, and a research workflow all happen after the initial request, but they do not have the same user contract. Some need transcript continuity. Some need at-least-once handling with idempotency. Some need exactly-once effects around a checkpoint. Some should never block the response path.

Queues fit in the same spirit. In the external task adapter, push notifications move through a queue so callback delivery does not become part of the task response path. That is not a glamorous piece of architecture, but it is the kind of decision that prevents a distant webhook from holding the core system hostage.
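To make the response-path point concrete, here is a minimal sketch with an in-memory queue. `CallbackQueue`, `handleTask`, and the callback URL are hypothetical, and the consumer assumes at-least-once delivery, so it deduplicates by notification id before sending.

```typescript
interface PushNotification {
  id: string; // doubles as the idempotency key
  url: string;
  body: string;
}

class CallbackQueue {
  private pending: PushNotification[] = [];
  private delivered = new Set<string>();

  enqueue(n: PushNotification): void {
    this.pending.push(n);
  }

  // Attempt every pending notification once; failures stay queued for the next drain.
  drain(send: (n: PushNotification) => boolean): number {
    const batch = this.pending;
    this.pending = [];
    let sent = 0;
    for (const n of batch) {
      if (this.delivered.has(n.id)) continue; // duplicate delivery: drop it
      if (send(n)) {
        this.delivered.add(n.id);
        sent += 1;
      } else {
        this.pending.push(n);
      }
    }
    return sent;
  }

  size(): number {
    return this.pending.length;
  }
}

// The task response does not wait for the webhook; it only records the intent to notify.
function handleTask(queue: CallbackQueue, taskId: string): { status: "accepted"; taskId: string } {
  queue.enqueue({ id: `${taskId}:done`, url: "https://example.invalid/callback", body: "{}" });
  return { status: "accepted", taskId };
}
```

If the remote endpoint is slow or down, `handleTask` still returns immediately, and the cost of the failure is a retry in the consumer rather than a stalled task.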

The numbers were small and useful

The most useful Cloudflare tests were the ones that refused to stay local.

Local emulation can prove a lot, but not everything that matters: hibernating sockets, idle reap, redeploy-during-work, long-stream behavior, and cost ceilings only become honest when tested against the real platform. The staging drills in this case study were small, but they had receipts.

An idle-reap drill waited three minutes and converged in 693 ms against a 15-second service-level target. A long-turn soak ran for roughly three minutes with 36 keepalives. A sandbox cost probe measured 0.0625 vCPU-seconds under a ceiling of 1. A redeploy-mid-flight drill recovered the expected event rather than inventing a second history.
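The two drills with explicit ceilings reduce to simple arithmetic, sketched below. The `DrillResult` shape and helper names are hypothetical; the measured values and ceilings are the ones quoted above.

```typescript
interface DrillResult {
  name: string;
  measured: number;
  ceiling: number;
  unit: string;
}

const idleReap: DrillResult = {
  name: "idle-reap convergence",
  measured: 693,
  ceiling: 15_000, // 15-second service-level target, in milliseconds
  unit: "ms",
};

const sandboxCost: DrillResult = {
  name: "sandbox cost probe",
  measured: 0.0625,
  ceiling: 1,
  unit: "vCPU-seconds",
};

// Fraction of the budget consumed; anything at or below 1 passes.
function budgetUsed(d: DrillResult): number {
  return d.measured / d.ceiling;
}

function passes(d: DrillResult): boolean {
  return d.measured <= d.ceiling;
}
```

Under these figures the idle-reap drill used about 4.6% of its target and the cost probe used 6.25% of its ceiling.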

These are not grand benchmark claims. They are more useful than that. They are operational facts about the failure modes the system actually cared about.

Other limits became part of the product shape: four active turns per project by default, 50 inline workspace changes before writeback needs a more durable path, 30-day idempotency retention for ordinary operations and longer retention for long-lived ones, 512 KiB voice frames, 80 voice frames per second, a five-minute voice session TTL, and ordinary stream timeouts measured in minutes, with long-running stream paths allowed to stretch much further.
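One way to keep limits like these honest is to hold them in a single typed object and enforce them where the decision is made. The sketch below is illustrative only: the object layout, `TurnReservations`, and `acceptVoiceFrame` are hypothetical names, and it assumes the 512 KiB figure is a per-frame maximum.

```typescript
// Values taken from the limits listed above; the structure is an assumption.
const limits = {
  activeTurnsPerProject: 4,
  inlineWorkspaceChanges: 50,
  idempotencyRetentionDays: 30,
  voiceFrameMaxBytes: 512 * 1024, // assumed to be a maximum frame size
  voiceFramesPerSecond: 80,
  voiceSessionTtlMs: 5 * 60 * 1000,
} as const;

class TurnReservations {
  private active = new Map<string, Set<string>>();

  // Returns true if the turn holds a slot, false if the project is at its limit.
  reserve(projectId: string, turnId: string): boolean {
    const turns = this.active.get(projectId) ?? new Set<string>();
    if (turns.has(turnId)) return true; // reserving twice is a no-op
    if (turns.size >= limits.activeTurnsPerProject) return false;
    turns.add(turnId);
    this.active.set(projectId, turns);
    return true;
  }

  release(projectId: string, turnId: string): void {
    this.active.get(projectId)?.delete(turnId);
  }
}

function acceptVoiceFrame(byteLength: number): boolean {
  return byteLength <= limits.voiceFrameMaxBytes;
}
```

A fifth concurrent turn on the same project is refused until one of the first four is released, which is the kind of behavior a user can be told about in advance.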

Numbers like these are not decoration. They are where a platform stops being a mood and starts being a contract.

The fit

Cloudflare fit best where the problem already wanted named stateful authorities.

Durable Objects fit thread continuity, resource state, workspace ledgers, inference stream records, and adapter session continuity. R2 fit content-addressed blobs and snapshots. KV fit read replicas and caches, as long as it was not allowed to grow ambitions. Workflows fit independent long jobs. Queues fit external delivery. Browser Rendering fit browser tasks that should not depend on a developer laptop. Tail Workers fit diagnostics because the system needed to observe itself as a graph, not as one process with a long log file.

The rough edges were also useful. Multi-Worker local development is not the same as the real edge. Hibernating WebSockets have real-platform behavior. Worker loaders and browser bindings need careful staging. Inference resume semantics need to be honest about what the provider and gateway can actually promise. A Worker graph needs deploy and dry-run checks or the binding topology becomes a rumor.

None of that means every agent belongs on Cloudflare. That would be the wrong lesson, and it would smell suspiciously like a slide deck.

The more useful conclusion is narrower: agent systems get clearer when every bit of state has a named durable home. Cloudflare gives you a strong vocabulary for that if you are willing to let the product boundaries follow the state, rather than forcing the state to follow a diagram drawn before the work began.